Unlock the power of GPU-accelerated computing without the burden of upfront infrastructure investments. Our AI Infra-as-a-Service delivers on-demand, high-performance GPU clusters that integrate seamlessly with leading cloud providers such as AWS, Azure, GCP, and Oracle Cloud, giving you the flexibility to scale your AI workloads anytime, anywhere.

Key Features

⚡ On-Demand GPU Infrastructure

- Instantly access GPU-powered compute clusters for training, inference, and data-intensive workloads.

- Scale up or down as workload requirements change, without being tied to fixed hardware capacity.

🔗 Seamless Multi-Cloud Integration

- Natively integrates with AWS, Azure, GCP, and Oracle Cloud.

- Hybrid-ready architecture ensures smooth workload migration across environments.

🧠 Optimized for AI & LLMs

- Purpose-built for Large Language Model (LLM) training, fine-tuning, and inferencing.

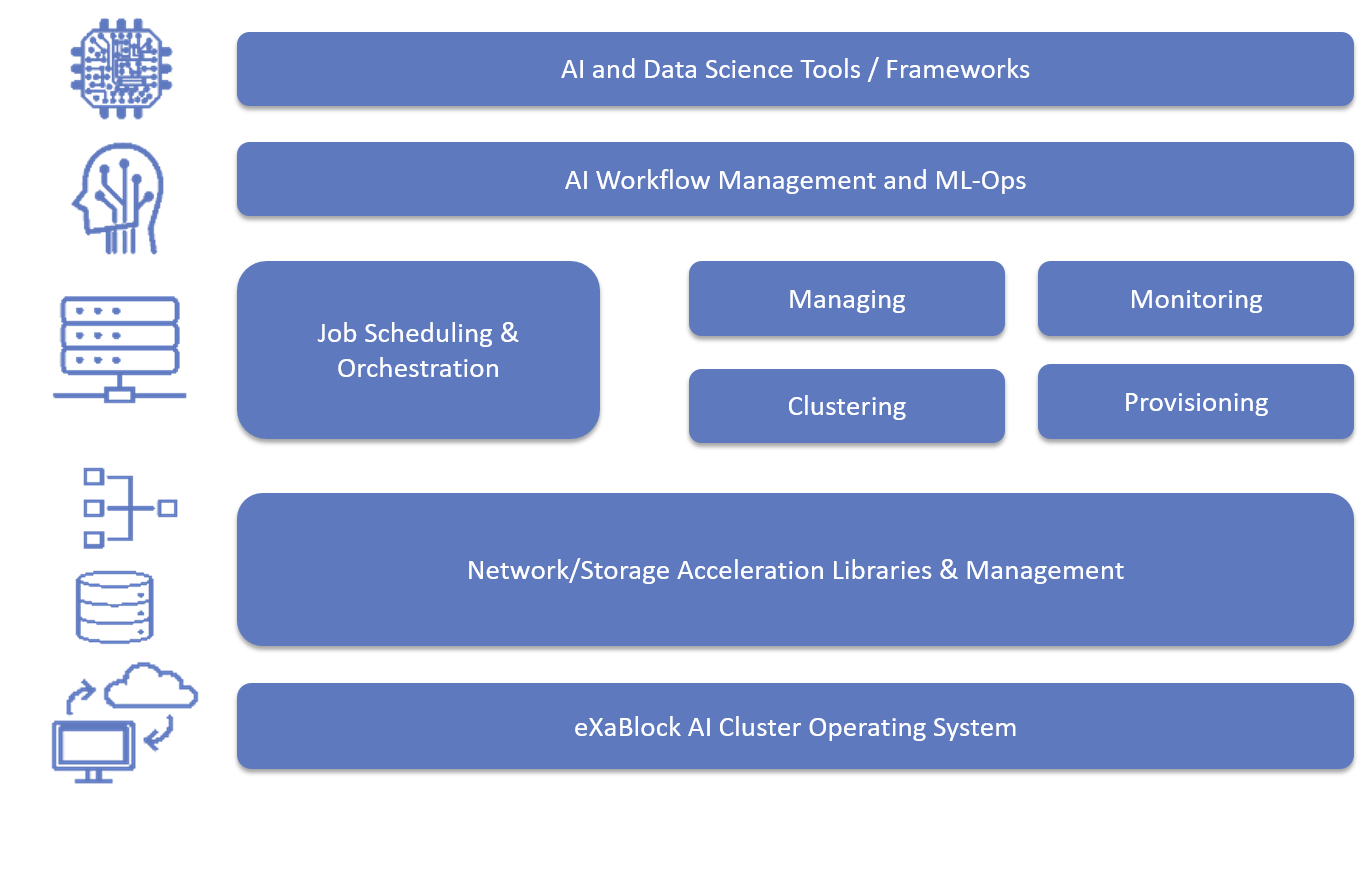

- Supports end-to-end AI pipelines including data preprocessing, model training, and deployment.

💳 Flexible Pay-As-You-Go Model

- No upfront capital expenditure – pay only for the resources you use.

- Transparent pricing and usage-based billing for cost control.
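To illustrate what usage-based billing means in practice, here is a minimal Python sketch that totals a bill as GPU count × hours used × hourly rate per GPU. The `UsageRecord` type, the GPU type names, and the rates are hypothetical placeholders for illustration only; they are not actual pricing or a billing API.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    """One hypothetical usage entry: how many GPUs of a type ran for how long."""
    gpu_type: str
    gpu_count: int
    hours: float


# Hypothetical hourly rates in USD per GPU; NOT actual pricing.
HYPOTHETICAL_RATES = {"gpu-a": 2.50, "gpu-b": 4.00}


def usage_cost(records, rates):
    """Sum cost over all records: gpu_count * hours * per-GPU hourly rate."""
    total = 0.0
    for r in records:
        total += r.gpu_count * r.hours * rates[r.gpu_type]
    return round(total, 2)


# Example: 8 GPUs of type "gpu-a" for 3 hours, plus 2 of type "gpu-b" for 1.5 hours.
records = [UsageRecord("gpu-a", 8, 3.0), UsageRecord("gpu-b", 2, 1.5)]
print(usage_cost(records, HYPOTHETICAL_RATES))  # 72.0
```

With these placeholder rates, 8 × 3 × 2.50 = 60.00 and 2 × 1.5 × 4.00 = 12.00, so the total is 72.00; when the cluster is scaled down to zero, no further charges accrue.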

Benefits

- 🚀 Accelerate AI Innovation – access GPU compute instantly without long procurement cycles.

- 💰 Reduce Costs – eliminate heavy upfront investments with a consumption-based model.

- 🔒 Enterprise-Grade Security – compliant, secure, and reliable infrastructure for sensitive workloads.

- 🌍 Global Scalability – deploy workloads closer to your users across multiple geographies.

- ⚙️ Operational Flexibility – seamlessly switch between cloud and on-premises as business needs change.